Klingemann applies deep learning techniques in an attempt to discover new forms of aesthetics and to blur the lines between human and machine creativity. He has worked with image-focused neural network architectures since the release of Deep Dream.

Style transfer, PPGN, pix2pix and [CycleGAN] are among the architectures he has experimented with in an artistic context. Klingemann showed several of his “Neurographer” works at Ars Electronica 2017.

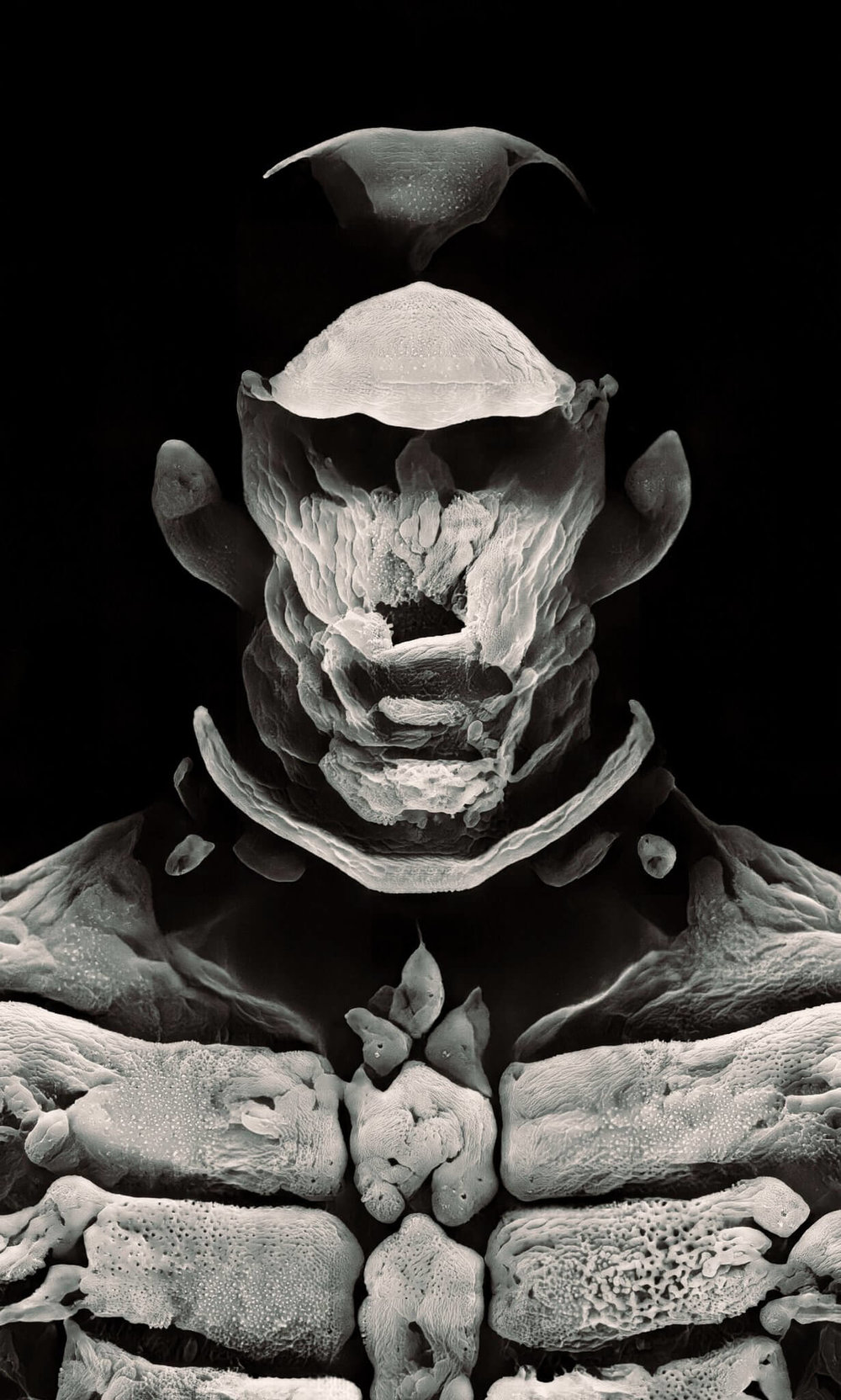

[CycleGAN] CycleGAN is a technique for training image-to-image translation models without paired examples. The models are trained in an unsupervised manner on two collections of images, one from the source domain and one from the target domain, and the images in the two collections do not need to correspond to each other.
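
To make the idea of training without paired examples more concrete, here is a minimal sketch of the cycle-consistency term that CycleGAN adds to its adversarial losses: an image translated to the other domain and back should come out close to where it started. This is an illustration only, not Klingemann's code or a real CycleGAN implementation. The names `cycle_consistency_loss`, `brighten`, `darken` and `identity` are hypothetical, the "images" are tiny lists of numbers, and the two "generators" are fixed functions standing in for trained networks.

```python
from typing import Callable, List

Image = List[float]  # a flattened toy "image"; real models operate on tensors


def l1_distance(a: Image, b: Image) -> float:
    """Mean absolute difference between two equally sized images."""
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def cycle_consistency_loss(
    g: Callable[[Image], Image],  # hypothetical generator: source -> target
    f: Callable[[Image], Image],  # hypothetical generator: target -> source
    source_batch: List[Image],
    target_batch: List[Image],
) -> float:
    """Average L1 error after a round trip in both directions.

    The two batches are never compared with each other, which is why the
    source and target images do not need to be paired.
    """
    forward = sum(l1_distance(f(g(x)), x) for x in source_batch) / len(source_batch)
    backward = sum(l1_distance(g(f(y)), y) for y in target_batch) / len(target_batch)
    return forward + backward


# Toy stand-ins for trained networks (assumptions for illustration only).
def brighten(img: Image) -> Image:
    return [p + 0.5 for p in img]


def darken(img: Image) -> Image:
    return [p - 0.5 for p in img]


def identity(img: Image) -> Image:
    return list(img)


source_images = [[0.0, 0.25], [0.5, 0.75]]
target_images = [[1.0, 1.25]]  # unrelated to the source images, and a different count

# darken undoes brighten, so the round trip reproduces every input.
consistent = cycle_consistency_loss(brighten, darken, source_images, target_images)
# identity does not undo brighten, so the round trip drifts away from the input.
inconsistent = cycle_consistency_loss(brighten, identity, source_images, target_images)

print(consistent, inconsistent)
```

In this toy setup the first pair of functions gives a loss of zero and the second a positive loss. In actual training, that gap is the signal that pushes the two generators toward being approximate inverses of each other.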

Klingemann is currently an Artist in Residence at Google Arts & Culture. He also helps institutions such as the British Library, Cardiff University and the New York Public Library process and classify their vast digital archives. He believes that his future creative agents will require a solid foundation of human knowledge to build upon. Klingemann received the British Library Labs Artistic Award in 2016 and won the Lumen Prize Gold Award in 2018.

Source: https://aiartists.org/mario-klingemann