These images were created by a deep convolutional generative adversarial network (DCGAN) trained on a database of handwritten Chinese characters, made with code by [Alec Radford] based on the paper by Radford, [Luke Metz] , and [Soumith Chintala] in November 2015.

[Alec Radford]

ML developer/researcher at OpenAI Cofounder/advisor at indico.io

ML developer/researcher at OpenAI Cofounder/advisor at indico.io

[Luke Metz]

I am interested in machine learning, robotics, programming language design, electronics, 3D printing, and graphics.

I am currently teaching computers to do cool things at Google Brain.

I am interested in machine learning, robotics, programming language design, electronics, 3D printing, and graphics.

I am currently teaching computers to do cool things at Google Brain.

[Soumith Chintala]

I am a machine learning (ML) researcher and computer programmer. I am currently a Research Engineer at Facebook AI Research.

I am a machine learning (ML) researcher and computer programmer. I am currently a Research Engineer at Facebook AI Research.

The title is a reference to the 1988 book by Xu Bing, who composed thousands of fictitious glyphs in the style of traditional Mandarin prints of the Song and Ming dynasties.

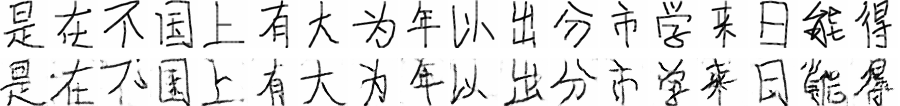

A DCGAN is a type of convolutional neural network which is capable of learning an abstract representation of a collection of images. It achieves this via competition between a “generator” which fabricates fake images and a “discriminator” which tries to discern if the generator’s images are authentic (more details). After training, the generator can be used to convincingly generate samples reminiscent of the originals.

Below, a DCGAN is trained on a labeled subset of ~1M handwritten simplified Chinese characters, after which the generator is able to produce fake images of characters not found in the original dataset.

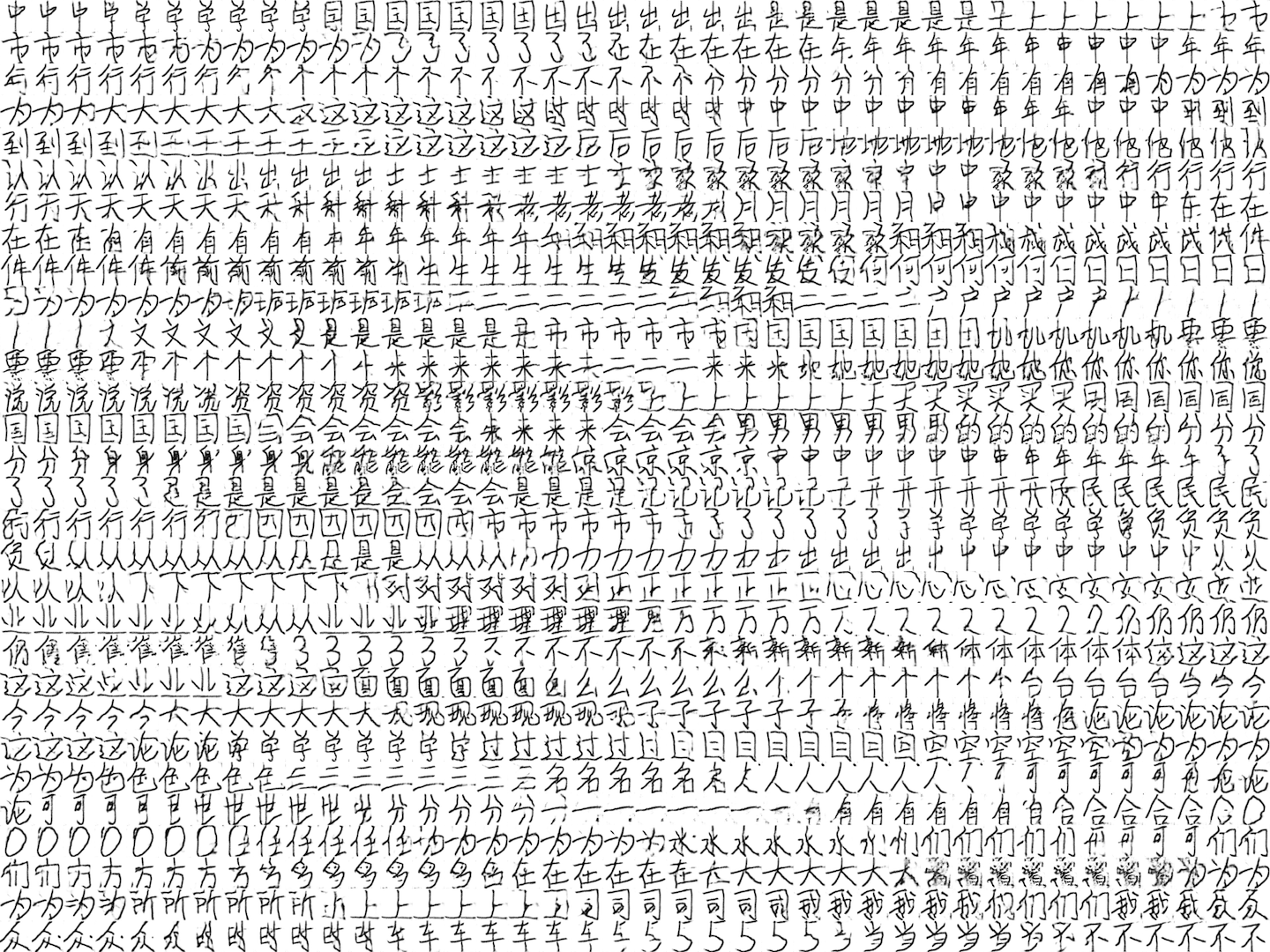

Exploring the latent space

The generator is parameterized by a vector within a high-dimensional latent space, allowing us to peer into its imagination. By traversing this space, we can explore the generator’s perception of possible characters.

Reading between the lines

Rather than simply exploring the neighborhood around individual characters, we can span the latent space between characters as well. By producing samples along a straight line from one character to the next, we get an impression of imaginary characters which are interpolated from in between real ones, perhaps corresponding to semantically intermediate concepts.

Radical interpolation

Chinese characters are comprised of radicals, which are graphical components that serve as the most basic semantic grouping of characters and usually hint at the character’s meaning. For instance, the characters 们 (they), 仔 (youngster) and 以 (with, using) all contain the radical 人 (person), appearing as 亻 in the first two. One of the most striking results was the preservation of radicals across character interpolations. For example, to the lower left is an interpolation through characters 后 (after), 台 (platform), and 名 (name), which share the radical 口 (mouth), highlighted in red. To the lower right, we traverse a sequence of characters sharing the 人 radical. Remarkably, the 人 appears coherent during the transitions, even as it glides into different forms and positions!

Linguistic algebra

An active area of research in computational linguistics is deriving geometric representations of words whose spatial interrelationships closely correlate with their semantic ones. These “word vectors” can be expressed by equations such as − + = , despite having had no prior knowledge of these words’ meanings. Since the DCGAN’s ability to interpolate is just a special case of its more general capacity for combining character classes arithmetically, we can attempt to determine if the above analogy and others also underpin our writing systems. Do the following equations match our visual expectations? Since “queen” is usually not expressed as a single character, we can’t compare.

Below is a matrix of interpolation loops between every pair among the 20 most frequent characters. The software, data, trained model, and code are preserved in this Terminal snap in which the next command will produce a new version of each image on this page. An extended version of this page with more images can be seen here . Thanks to Nick Frisch, Francis Tseng, Tom White, and Cheng-Lin Liu for their contributions, suggestions, and advice.

sources https://genekogan.com/works/a-book-from-the-sky2/